vSAN: The “Lifesaver” Solution for Expensive Shared Storage

Previously, to run advanced features like vMotion, HA, or DRS, you were forced to purchase dedicated storage arrays (SAN/NAS) from brands like Dell EMC or HP. These systems often cost a fortune. Managing complex FC cabling was also a major hurdle for system administrators.

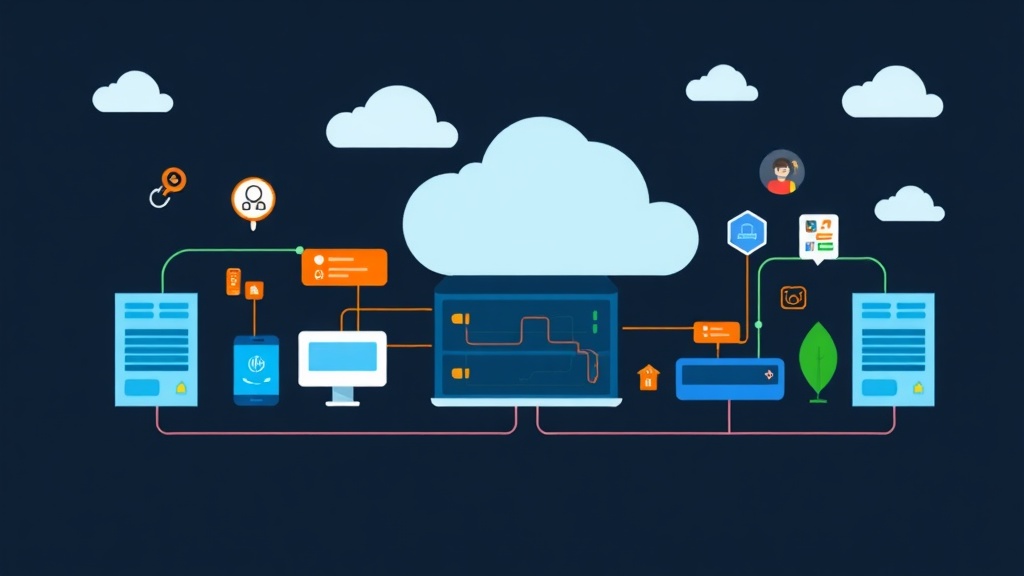

VMware vSAN emerged to change the game with Software-Defined Storage (SDS) technology. Instead of buying separate storage arrays, you use the drives (SSDs, HDDs) directly attached to your ESXi servers. vSAN pools them into a single shared datastore for all virtual machines. Real-world deployments show that vSAN can reduce CAPEX by up to 40%. Scaling is also flexible: add more drives or more Hosts to increase capacity.

Prerequisites: Don’t Let the “Purple Screen” Pay a Visit

Don’t rush into enabling vSAN if your system doesn’t meet the standards. Mistakes at this stage can easily lead to data loss or system crashes (PSOD):

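- Host Count: Minimum 3 ESXi Hosts for data safety. A 2-Host + 1 Witness model can also run, but 3 Hosts is the standard minimum. Keep in mind that with exactly 3 Hosts, vSAN has no spare Host on which to rebuild data after a Host failure, so a 4th Host is what gives you full self-healing.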

- Drives: Each Host needs at least one SSD for the Cache Tier (NVMe or SAS SSDs with DWPD > 3 are recommended) and at least one drive for the Capacity Tier.

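- Network Bandwidth: Minimum 1Gbps (hybrid configurations only), but 10Gbps is recommended and is required for all-flash. For 10Gbps networks, configure Jumbo Frames (MTU 9000) to optimize synchronization speeds, and set the same MTU on every VMkernel adapter and switch port along the path.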

- Controller: The RAID card must support Pass-through (JBOD) mode. If not available, you must configure RAID 0 for each individual drive, though this method is not recommended.
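The checklist above can be turned into a quick sanity check before you enable anything. The following is a minimal sketch, not a VMware tool: the `Host` record, its field names, and the sample inventory are all hypothetical, and you would fill them in by hand or from your own inventory export.

```python
from dataclasses import dataclass


@dataclass
class Host:
    # Hypothetical inventory record; none of these names come from a VMware API.
    name: str
    cache_ssds: int       # SSDs intended for the Cache Tier
    capacity_drives: int  # drives intended for the Capacity Tier
    nic_gbps: float       # bandwidth of the vSAN uplink
    passthrough: bool     # controller exposes drives in Pass-through (JBOD) mode


def check_prerequisites(hosts):
    """Return a list of problems; an empty list means every check passed."""
    problems = []
    if len(hosts) < 3:
        problems.append(f"only {len(hosts)} host(s); a standard cluster needs at least 3")
    for h in hosts:
        if h.cache_ssds < 1:
            problems.append(f"{h.name}: no SSD for the Cache Tier")
        if h.capacity_drives < 1:
            problems.append(f"{h.name}: no drive for the Capacity Tier")
        if h.nic_gbps < 1:
            problems.append(f"{h.name}: vSAN uplink below 1Gbps")
        elif h.nic_gbps < 10:
            problems.append(f"{h.name}: vSAN uplink below the recommended 10Gbps")
        if not h.passthrough:
            problems.append(f"{h.name}: controller lacks Pass-through (JBOD) mode")
    return problems


hosts = [
    Host("esxi-01", 1, 2, 10, True),
    Host("esxi-02", 1, 2, 10, True),
    Host("esxi-03", 0, 2, 1, False),
]
for problem in check_prerequisites(hosts):
    print(problem)
```

In this made-up inventory only esxi-03 is reported, which is the kind of outlier worth fixing before moving on to the configuration steps.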

Steps to Configure vSAN on vCenter

Step 1: Setting up VMkernel Networking

vSAN data is constantly exchanged between Hosts. If vSAN traffic shares the Management network without VLAN isolation, you will face severe performance bottlenecks.

- In vCenter, select the ESXi Host -> Configure -> Networking -> VMkernel adapters.

- Click Add Networking -> VMkernel Network Adapter.

- Select an existing Switch. Under Enabled services, check the vSAN box.

Repeat this process on all Hosts in the Cluster to ensure seamless connectivity.
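Because the adapter is created by hand on every Host, an MTU mismatch is an easy mistake to make. Here is a small sketch of two checks worth doing: spotting the Host whose MTU differs from the rest, and working out the ping payload that exactly fills one frame. The host names and MTU values are invented for illustration.

```python
IP_HEADER = 20   # bytes, IPv4 header without options
ICMP_HEADER = 8  # bytes, ICMP echo header


def ping_payload(mtu):
    """Largest ICMP payload that fits in one unfragmented IPv4 packet."""
    return mtu - IP_HEADER - ICMP_HEADER


def mtu_mismatches(vmk_mtu):
    """Return the hosts whose vSAN VMkernel MTU differs from the most common value."""
    values = list(vmk_mtu.values())
    expected = max(set(values), key=values.count)
    return {host: mtu for host, mtu in vmk_mtu.items() if mtu != expected}


# Hypothetical values collected from the three Hosts
vmk_mtu = {"esxi-01": 9000, "esxi-02": 9000, "esxi-03": 1500}

print(ping_payload(9000))
print(mtu_mismatches(vmk_mtu))
```

The payload figure is the size to use in a don't-fragment ping between Hosts, for example `vmkping -I vmk1 -d -s 8972 <peer IP>`, where `vmk1` stands in for whatever your vSAN VMkernel adapter is called. If that ping fails while a normal ping works, something along the path is not passing Jumbo Frames.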

Step 2: Activating the vSAN Cluster

After preparing the network infrastructure, we will activate the centralized storage feature.

- Select the Cluster -> Configure -> vSAN -> Services.

- Select Configure and define the configuration type (usually Single Site Cluster).

- vSAN will automatically scan for available drives across all Hosts in preparation for the Claiming step.

Step 3: Assigning Drive Roles (Disk Claiming)

This is the stage that determines the system’s read/write speed. You need to clearly assign roles for each type of drive.

- Cache Tier: Select the SSD with the highest speed and best endurance.

- Capacity Tier: Select the remaining drives for VM data storage.
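The role split can be sketched as a simple selection rule. This is only an illustration of the reasoning, under the simplifying assumption that endurance (DWPD) alone decides the cache drive; the `Drive` record and the sample drives are hypothetical, and in practice you should also weigh speed and the hardware compatibility list.

```python
from dataclasses import dataclass


@dataclass
class Drive:
    # Hypothetical description of one local drive on a Host
    name: str
    is_ssd: bool
    dwpd: float   # rated drive writes per day (endurance)
    size_gb: int


def assign_roles(drives):
    """Pick the highest-endurance SSD as cache; every other drive becomes capacity."""
    ssds = [d for d in drives if d.is_ssd]
    if not ssds:
        raise ValueError("no SSD available for the Cache Tier")
    cache = max(ssds, key=lambda d: d.dwpd)
    capacity = [d for d in drives if d is not cache]
    if not capacity:
        raise ValueError("no drive left for the Capacity Tier")
    return cache, capacity


drives = [
    Drive("nvme0", True, 10.0, 400),
    Drive("ssd1", True, 1.0, 1920),
    Drive("ssd2", True, 1.0, 1920),
]
cache, capacity = assign_roles(drives)
print("cache:", cache.name)
print("capacity:", [d.name for d in capacity])
```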

If you want to quickly check the list of available drives via PowerCLI, you can use the following script:

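```powershell
# Connect to vCenter
Connect-VIServer -Server 192.168.1.10

# List local disks on every Host (candidates for claiming), skipping generic mpx.* devices
Get-VMHost | Get-ScsiLun -LunType disk |
    Where-Object { $_.IsLocal -and $_.CanonicalName -notlike "mpx.*" } |
    Select-Object VMHost, CanonicalName, IsSsd, CapacityGB
```

Monitoring the System with Skyline Health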

Finishing the configuration doesn’t mean the job is done. Access the vSAN Skyline Health section immediately. This tool will alert you if RAID card firmware is outdated, MTU is inconsistent, or if a drive shows signs of imminent failure. Never ignore the red warnings here.

Optimizing Storage Policies

vSAN allows for flexible management at the VM level. You can set a Database VM to use RAID 1 (Mirroring) with FTT=1 for safety, while a Test VM uses FTT=0 (RAID 0, no redundancy) to save space. Adjust the Failures to Tolerate (FTT) parameter in the VM Storage Policies section. For a 3-Host Cluster, FTT=1 with RAID 1 is the standard configuration, and it is also the highest protection level that 3 Hosts can support.
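The cost of a policy is easy to work out for RAID 1 (Mirroring): tolerating n failures means keeping n + 1 full copies of the data, spread over at least 2n + 1 Hosts. A rough sketch of that arithmetic, which ignores the small witness components and any free-space reserve, with the function name and VM sizes chosen only for illustration:

```python
def raid1_requirements(vm_size_gb, ftt):
    """Approximate raw capacity and minimum Hosts for RAID 1 (Mirroring) at a given FTT."""
    if ftt < 0:
        raise ValueError("FTT cannot be negative")
    copies = ftt + 1         # full replicas of the data
    min_hosts = 2 * ftt + 1  # replicas plus witnesses need this many Hosts
    return {"raw_gb": vm_size_gb * copies, "min_hosts": min_hosts}


print(raid1_requirements(500, 1))  # e.g. a Database VM
print(raid1_requirements(500, 0))  # e.g. a Test VM
```

A 500 GB VM at FTT=1 therefore consumes about 1,000 GB of raw capacity and needs 3 Hosts, which is why a 3-Host Cluster cannot go beyond FTT=1 with mirroring.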

Real-world Experience: 3 Mistakes That Can “Crash” Your Cluster

Here are some hard-earned lessons I’ve gathered from various projects:

- Using Consumer SSDs: Never use Samsung EVO or other consumer-grade SSDs for the Cache Tier. vSAN’s write intensity will kill these drives in just a few months. Invest in Enterprise drives.

- Time Drift (NTP): vSAN is extremely sensitive to clock drift. If the clocks on your Hosts drift apart by more than 60 seconds, the Cluster can run into a Network Partition. Point every Host at the same NTP source.

- Forgetting Unicast/Multicast: Since vSAN 6.6, Unicast is used by default. However, if you are maintaining older systems, ensure the physical Switch has IGMP Snooping enabled to support Multicast.
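For the time drift point, the check itself is just a comparison of the fastest and slowest clock against the 60-second figure mentioned above. A minimal sketch, assuming you have already collected one timestamp per Host at roughly the same moment (the host names and timestamps below are invented):

```python
from datetime import datetime, timedelta

MAX_DRIFT = timedelta(seconds=60)  # threshold quoted in the list above


def max_drift(host_times):
    """Largest difference between any two Host clocks."""
    times = list(host_times.values())
    return max(times) - min(times)


# Hypothetical readings taken from each Host
base = datetime(2024, 1, 1, 12, 0, 0)
host_times = {
    "esxi-01": base,
    "esxi-02": base + timedelta(seconds=2),
    "esxi-03": base + timedelta(seconds=75),
}

drift = max_drift(host_times)
status = "too high" if drift > MAX_DRIFT else "ok"
print(f"{drift.total_seconds():.0f}s of drift: {status}")
```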

To quickly check the status from the command line, SSH into ESXi and type:

```
esxcli vsan cluster get
```

If Local Node State shows MASTER, BACKUP, or AGENT, Local Node Type is NORMAL, and Local Node Health State is HEALTHY, congratulations: you have successfully configured it.
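If you collect this output from several Hosts, a few lines of parsing save you from reading it by eye. The sketch below assumes the output is a list of `Key: value` lines; the sample text is an abbreviated illustration rather than a real capture, and field names may differ between vSAN versions, so adjust them to what your Hosts actually print.

```python
# Abbreviated, illustrative sample; real output contains more fields (UUIDs, timestamps).
SAMPLE = """\
Cluster Information
   Enabled: true
   Local Node Type: NORMAL
   Local Node State: MASTER
   Local Node Health State: HEALTHY
   Sub-Cluster Member Count: 3
"""


def parse_cluster_get(text):
    """Turn 'Key: value' lines into a dict, ignoring lines without a colon."""
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def looks_healthy(fields, expected_hosts):
    """True when the node has a cluster role, reports healthy, and sees every Host."""
    return (
        fields.get("Local Node State") in {"MASTER", "BACKUP", "AGENT"}
        and fields.get("Local Node Health State") == "HEALTHY"
        and fields.get("Sub-Cluster Member Count") == str(expected_hosts)
    )


fields = parse_cluster_get(SAMPLE)
print(looks_healthy(fields, expected_hosts=3))
```

Comparing the member count against the number of Hosts you expect is a cheap way to catch a partitioned Cluster, where each Host looks fine on its own but sees fewer members than it should.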

Building vSAN isn’t too difficult if you master the rules of hardware and network infrastructure. Once running stably, vSAN provides a level of flexibility that traditional SAN systems struggle to match.