The Problem: When a Single Network Cable Becomes a Bottleneck

About six months ago, I was managing a database cluster for an e-commerce platform serving roughly 10,000 concurrent users. During peak flash-sale hours, Grafana went bright red: the eth0 interface hit 950Mbps, about 95% of its 1Gbps capacity. Latency skyrocketed, scheduled backups hung, and transactions began failing with timeout errors.

Upgrading to 10Gbps cards was not an option at the time, because the old switch infrastructure lacked SFP+ ports. Simply adding more network cards with different IPs would have forced me to modify the application code to balance load across them manually, which is extremely complex. On top of that, everything hung off a single cable: one loose RJ45 connector would cut the whole server off the network.

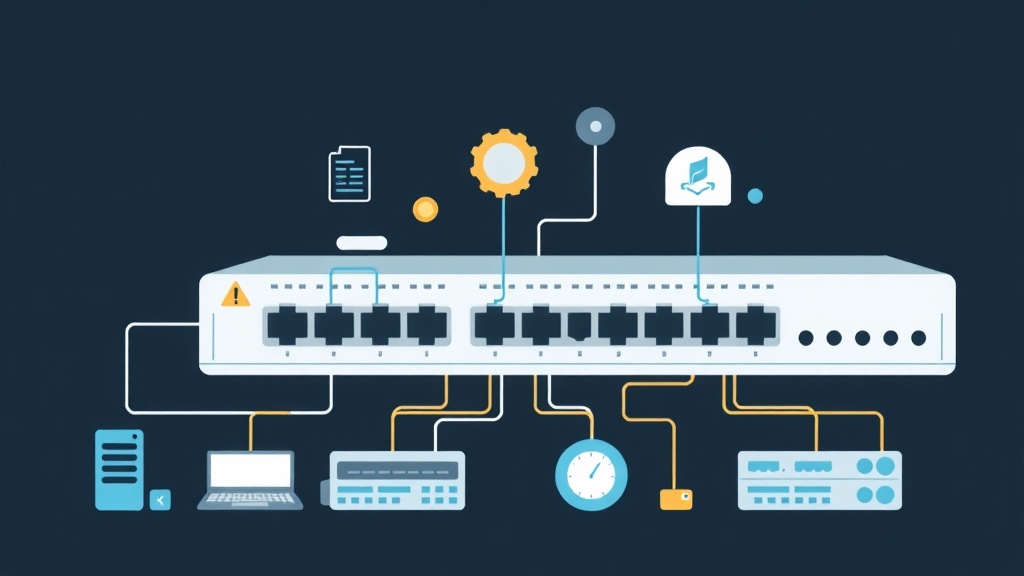

Why LACP (802.3ad) is the Lifesaver

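The Linux bonding driver supports several modes for grouping network cards. If you need both performance and stability, LACP (Mode 4, IEEE 802.3ad) is the top choice. It aggregates two 1Gbps cards into one logical link with 2Gbps of total capacity and fails over automatically when a link breaks. Note that each individual flow, such as a single TCP connection, still travels over one physical link and tops out at 1Gbps; the extra capacity comes from spreading many connections across both links.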

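Unlike Mode 0, which stripes packets across links and often delivers them out of order, or Mode 1, which uses only one link at a time, Mode 4 performs a real negotiation ("handshake") between the server and the switch by exchanging LACP control frames (LACPDUs). When a link fails, the bond drops it from the group quickly: a pulled cable is noticed within about one miimon polling interval (100 ms in the configurations below), while a partner that silently stops sending LACPDUs is timed out after about 3 seconds with lacp_rate=fast (90 seconds with the default slow rate). Traffic moves to the surviving link, and established TCP sessions normally recover through retransmission instead of being dropped.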
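The failover timers can be put into rough numbers. This is a sketch under stated assumptions: standard 802.3ad timing (a partner is declared gone after three missed LACPDUs, which are sent every 1 s with lacp_rate=fast and every 30 s with slow), and MII detection approximated as one polling interval plus any configured downdelay. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# Rough worst-case failure detection for a Linux 802.3ad bond.
# ASSUMPTION: standard 802.3ad timers; a partner is declared gone after
# 3 missed LACPDUs, sent every 1 s (lacp_rate=fast) or every 30 s (slow).
lacp_timeout_s() {  # lacp_rate: fast|1 or slow|0
  case "$1" in
    fast|1) echo $(( 3 * 1 )) ;;
    slow|0) echo $(( 3 * 30 )) ;;
    *) echo "unknown lacp_rate: $1" >&2; return 1 ;;
  esac
}

# Carrier loss is seen at the next MII poll; downdelay (ms) then postpones
# removing the port. ASSUMPTION: worst case is roughly the sum of the two.
mii_detect_ms() {  # miimon_ms [downdelay_ms]
  echo $(( $1 + ${2:-0} ))
}

echo "cable pulled, miimon=100:       ~$(mii_detect_ms 100) ms"
echo "partner silent, lacp_rate=fast: ~$(lacp_timeout_s fast) s"
echo "partner silent, lacp_rate=slow: ~$(lacp_timeout_s slow) s"
```

The gap between the fast and slow rates is why the configurations in this article set lacp_rate to fast.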

Important Note: LACP requires support on the switch. If you configure it on the server but plug the cables into a cheap unmanaged switch, no LACP partner will answer: you get no aggregation, and at best a single port carries the traffic.

Quick Comparison of Common Bonding Modes

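- Active-Backup (Mode 1): High safety but wasteful. You have 2 cards, but only one carries traffic at a time, so bandwidth is still limited to 1Gbps.

- Round-robin (Mode 0): Aggregates bandwidth, but requires a matching static configuration on the switch, and striping packets across links delivers TCP segments out of order, which triggers retransmissions and cuts throughput.

- Adaptive Load Balancing (Mode 6): Works with standard switches and needs no switch configuration, but it balances incoming traffic by manipulating ARP replies, which is less predictable than an aggregate negotiated with the switch through LACP.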
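The list uses mode numbers, while the configurations below use both forms (mode=4 for nmcli, 802.3ad for Netplan). This small illustrative helper (the function name is hypothetical) maps the bonding driver's mode numbers to their names. On a running system, `cat /sys/class/net/bond0/bonding/mode` reports the active mode as name and number, for example `802.3ad 4`.

```bash
#!/usr/bin/env bash
# Map Linux bonding mode numbers to the mode names the driver uses.
bond_mode_name() {  # mode number 0-6
  case "$1" in
    0) echo balance-rr ;;
    1) echo active-backup ;;
    2) echo balance-xor ;;
    3) echo broadcast ;;
    4) echo 802.3ad ;;
    5) echo balance-tlb ;;
    6) echo balance-alb ;;
    *) echo "unknown bonding mode: $1" >&2; return 1 ;;
  esac
}

# The three modes compared above, plus the other numbered modes for reference.
for m in 0 1 2 3 4 5 6; do
  printf 'mode %s = %s\n' "$m" "$(bond_mode_name "$m")"
done
```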

Deploying LACP Properly on Linux

Here is the approach I applied to a production system that has run stably for the past 6 months. Both ends must be configured, the switch and the server; the bond will not negotiate LACP until both sides are set up.

1. Configuration on the Switch (Cisco Example)

You must first group the physical ports into a Port-Channel. For example, to aggregate ports Gi0/1 and Gi0/2:

```
interface range GigabitEthernet0/1 - 2
 channel-group 1 mode active
 channel-protocol lacp
```

The `mode active` setting makes the switch actively send LACP packets to negotiate and maintain the aggregate.

2. Configuring with nmcli (RHEL/CentOS/AlmaLinux, or Debian with NetworkManager installed)

Suppose you have 2 interfaces eth1 and eth2. Create a virtual interface named bond0 using the following commands:

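```bash
# Create the bond0 interface: mode=4 is 802.3ad (LACP), lacp_rate=1 is "fast"
nmcli connection add type bond con-name bond0 ifname bond0 bond.options "mode=4,miimon=100,lacp_rate=1,xmit_hash_policy=layer3+4"

# Add the physical cards to the bond
nmcli connection add type ethernet slave-type bond con-name bond0-eth1 ifname eth1 master bond0
nmcli connection add type ethernet slave-type bond con-name bond0-eth2 ifname eth2 master bond0

# Set a static IP
nmcli connection modify bond0 ipv4.addresses 192.168.1.100/24 ipv4.gateway 192.168.1.1 ipv4.method manual

# Activate the bond
nmcli connection up bond0
```

Quick Tip: Use xmit_hash_policy=layer3+4. It hashes on IP addresses and TCP/UDP ports instead of only on MAC addresses (the default layer2 policy), so different connections can be spread across both links. With layer2, everything the server sends through the same gateway carries the same destination MAC and therefore leaves on the same link. Keep in mind that this policy only controls traffic the server transmits; how the switch spreads traffic toward the server depends on the switch's own load-balancing setting (`port-channel load-balance` on Cisco).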
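To see why the hash policy matters, here is a simplified model of the layer2 and layer3+4 formulas described in the Linux kernel bonding documentation. It is an illustration only: the helper names and the MAC/IP addresses are hypothetical, and the real kernel hashes network-byte-order values with extra steps, so this will not predict which link a real packet uses. It does show the pattern.

```bash
#!/usr/bin/env bash
# Simplified model of two bonding xmit_hash_policy formulas.
# ASSUMPTION: the real kernel hashes network-byte-order values and adds
# steps, so this illustrates the pattern, not the exact link chosen.

# Dotted-quad IPv4 address -> 32-bit integer.
ip_to_int() {
  local IFS=. a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# layer2: last byte of src MAC XOR last byte of dst MAC XOR EtherType
# (IPv4 = 0x0800), reduced modulo the number of links.
layer2_link() {  # src_mac dst_mac nlinks
  local s=$((16#${1##*:})) d=$((16#${2##*:}))
  echo $(( (s ^ d ^ 0x0800) % $3 ))
}

# layer3+4: both TCP/UDP ports as one 32-bit word, XOR both IPs,
# fold the high bits down, then reduce modulo the number of links.
layer34_link() {  # src_ip dst_ip src_port dst_port nlinks
  local h=$(( ($3 << 16) | $4 ))
  h=$(( h ^ $(ip_to_int "$1") ^ $(ip_to_int "$2") ))
  h=$(( h ^ (h >> 16) ))
  h=$(( h ^ (h >> 8) ))
  echo $(( h % $5 ))
}

# Hypothetical example: the database (192.168.1.100:3306) answers four
# connections from one remote client, all routed via the same gateway MAC.
SRV_MAC=52:54:00:00:00:10   # hypothetical bond0 MAC
GW_MAC=00:11:22:33:44:01    # hypothetical gateway MAC
for cport in 40001 40002 40003 40004; do
  printf 'reply to port %s: layer2 -> link %s, layer3+4 -> link %s\n' "$cport" \
    "$(layer2_link "$SRV_MAC" "$GW_MAC" 2)" \
    "$(layer34_link 192.168.1.100 203.0.113.7 3306 "$cport" 2)"
done
```

In this model the layer2 column never changes, because the source and destination MACs are identical for every reply, while the layer3+4 column varies with the client port. The flip side is that any one connection always maps to the same link, which is why a single transfer still cannot exceed 1Gbps.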

3. Configuring on Ubuntu with Netplan

Open the file /etc/netplan/01-netcfg.yaml and edit the content as follows:

```yaml
network:
  version: 2
  ethernets:
    eth1: {}
    eth2: {}
  bonds:
    bond0:
      interfaces: [eth1, eth2]
      addresses: [192.168.1.100/24]
      routes:
        - to: default
          via: 192.168.1.1
      parameters:
        mode: 802.3ad
        mii-monitor-interval: 100
        lacp-rate: fast
        transmit-hash-policy: layer3+4
```

Then apply the configuration with `sudo netplan apply`.

Debugging Experiences from Sleepless Nights

My most memorable incident was a packet loss rate of about 3% that appeared only during peak hours. Every metric looked fine, yet the system became flaky every night at 10 PM.

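After careful inspection, I found that one network cable had a minor physical fault that made the interface flap (go down and back up) within milliseconds. With miimon=100, the bond checks link state every 100 ms, so it caught these flaps and kept removing and re-adding that card, which caused the instability. A bonding updelay (in milliseconds, a multiple of miimon) can keep a recovering port out of the bond until its link has stayed up that long, but a faulty cable ultimately has to be replaced.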
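A flapping port shows up as a `Link Failure Count` that keeps climbing in /proc/net/bonding/bond0. A minimal sketch for spotting it, assuming the `Slave Interface:` and `Link Failure Count:` labels printed by current kernels; `link_failures` and `flapping_ports` are hypothetical helper names. It lists the counter per port and compares two snapshots taken some time apart.

```bash
#!/usr/bin/env bash
# Print "<port> <link failure count>" for every port in a bonding status file.
link_failures() {  # [status_file], defaults to the live bond0 file
  local status=${1:-/proc/net/bonding/bond0}
  awk -F': ' '
    /^Slave Interface:/    { iface = $2 }
    /^Link Failure Count:/ { print iface, $2 }
  ' "$status"
}

# Compare two snapshots and report ports whose failure count went up.
flapping_ports() {  # before_file after_file
  awk 'NR == FNR { before[$1] = $2; next }
       $2 + 0 > before[$1] + 0 { printf "%s +%d\n", $1, $2 - before[$1] }' \
    <(link_failures "$1") <(link_failures "$2")
}

# Show the live counters when run on a host that has bond0.
if [[ -r /proc/net/bonding/bond0 ]]; then
  link_failures
fi
```

Usage: `cp /proc/net/bonding/bond0 /tmp/bond0.before`, wait through a busy period, then run `flapping_ports /tmp/bond0.before /proc/net/bonding/bond0`. Any port that prints here lost its link at least once in between.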

To check the LACP status most accurately, read the bonding driver's status file:

```bash
cat /proc/net/bonding/bond0
```

Look at the Partner Mac Address line. If it shows 00:00:00:00:00:00, the server is not receiving any LACP packets from the switch: the switch is misconfigured or is not running LACP on those ports.
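That check can be scripted. A minimal sketch, assuming the field labels current kernels print in /proc/net/bonding/bond0 (`Active Aggregator Info`, `Partner Mac Address`, and a per-port `Aggregator ID`); `check_lacp` is a hypothetical helper. Besides the all-zero partner MAC, it flags any port sitting in a different aggregator than the active one, which usually means that port is not negotiating with the same switch port-channel.

```bash
#!/usr/bin/env bash
# Sanity-check an 802.3ad bond from its /proc status text.
# ASSUMPTION: labels as printed by current kernels' bonding driver.
check_lacp() {  # [status_file], defaults to the live bond0 file
  local status=${1:-/proc/net/bonding/bond0}
  awk -F': ' '
    /^Active Aggregator Info:/          { in_active = 1; next }
    in_active && /Aggregator ID:/       { active_id = $2 }
    in_active && /Partner Mac Address:/ { partner = $2; in_active = 0 }
    /^Slave Interface:/                 { iface = $2 }
    /^Aggregator ID:/ && iface != ""    { agg[iface] = $2 }
    END {
      bad = 0
      if (partner == "" || partner == "00:00:00:00:00:00") {
        print "FAIL: no LACP partner, check the switch port-channel"; bad = 1
      }
      for (p in agg)
        if (agg[p] != active_id) {
          print "FAIL: " p " is in aggregator " agg[p] ", active is " active_id; bad = 1
        }
      if (!bad) print "OK: partner " partner ", all ports in aggregator " active_id
      exit bad
    }' "$status"
}

# Check the live bond when run on a host that has bond0.
if [[ -r /proc/net/bonding/bond0 ]]; then
  check_lacp
fi
```

The function returns a non-zero status on any failure, so it can also serve as a simple monitoring probe.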

Quick Checklist for Success:

- Always check with `ethtool eth1` (and `ethtool eth2`) that both network cards run at the same Speed and Duplex; 802.3ad only aggregates ports with matching speed and duplex.

- Prioritize `layer3+4` for Web or Database services so connections are spread across both links.

- A low-quality cable can drag down the performance of the entire LACP group; don't hesitate to replace it with a new one.
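The speed and duplex check can be automated too. A sketch with hypothetical helper names, assuming ethtool's usual `Speed:` and `Duplex:` output lines: `link_signature` reduces ethtool output to those two values, and `ports_match` compares them across every port passed in.

```bash
#!/usr/bin/env bash
# Reduce `ethtool <iface>` output (read from stdin) to "<speed> <duplex>".
link_signature() {
  awk -F': ' '/^[ \t]*Speed:/  { s = $2 }
              /^[ \t]*Duplex:/ { d = $2 }
              END { print s, d }'
}

# Print each port's speed/duplex; return 1 if any port differs from the first.
ports_match() {  # iface...
  local iface sig first=""
  for iface in "$@"; do
    sig=$(ethtool "$iface" | link_signature)
    echo "$iface: $sig"
    if [[ -z $first ]]; then
      first=$sig
    elif [[ $sig != "$first" ]]; then
      echo "MISMATCH: $iface differs from $1" >&2
      return 1
    fi
  done
}
```

Usage: `ports_match eth1 eth2` prints one line per port and exits non-zero on a mismatch, for example when one card has fallen back to 100Mb/s because of a bad cable.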

Implementing LACP is not just about aggregating bandwidth. It’s how you build a solid shield for your services against unpredictable infrastructure failures.