Why Does the Prometheus Pull Model Fail with Batch Jobs?

If you’ve worked with Prometheus, you know that its core mechanism is the pull model: the server periodically scrapes exporters over HTTP to collect data. Trouble begins when your system runs “ephemeral” tasks, short-lived jobs that appear and disappear quickly. Think of a log-cleaning CronJob that runs for only 3 seconds, or a database backup script that finishes in under a minute at 2 AM.

In the past, I struggled with backup jobs failing while Grafana still showed green. The reason was simple: Prometheus only sees what exists at scrape time, and typical scrape intervals are 15-30 seconds (the global default scrape_interval is actually 1 minute). If your job starts and finishes (or crashes) entirely between two scrapes, Prometheus is completely “blind” to it. As far as Prometheus is concerned, that job never existed.
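To put rough numbers on this blind spot: if scrapes arrive every T seconds at a random offset relative to the job, a job whose metrics exist for d seconds is seen by at least one scrape with probability min(1, d/T). The Python sketch below simply evaluates that formula. The function name is hypothetical, and the uniform-offset model is a simplifying assumption, not something Prometheus guarantees.

```python
def capture_probability(job_seconds: float, scrape_interval_seconds: float) -> float:
    """Chance that at least one scrape lands while a job's metrics exist.

    Simplifying assumptions (not Prometheus guarantees): scrapes are evenly
    spaced, their offset relative to the job start is uniformly random, and
    the job's metrics are only visible while it runs.
    """
    if job_seconds <= 0 or scrape_interval_seconds <= 0:
        raise ValueError("durations must be positive")
    # A grid of scrapes spaced T apart hits a window of length d with probability d / T, capped at 1.
    return min(1.0, job_seconds / scrape_interval_seconds)


if __name__ == "__main__":
    for job, interval in [(3, 15), (3, 30), (0.5, 1)]:
        p = capture_probability(job, interval)
        print(f"{job}s job, {interval}s scrape interval: seen {p:.0%}, missed {1 - p:.0%}")
```

Under that model, the 3-second log cleaner is missed 80% of the time at a 15-second interval and 90% at 30 seconds, and even a 1-second interval misses a 0.5-second job half the time.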

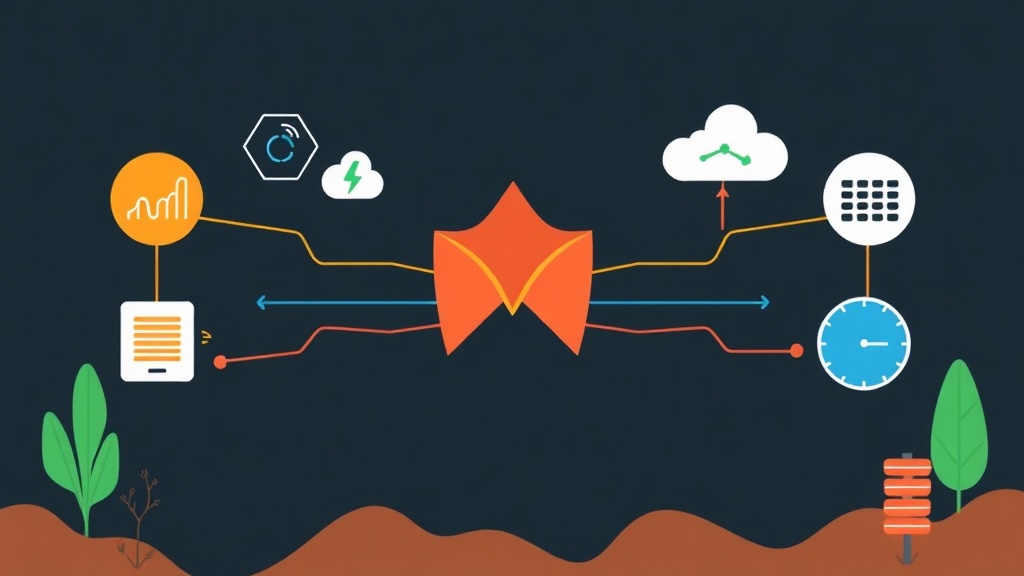

Prometheus Pushgateway was created to fill this gap. Think of it as an intermediary “mailbox.” Instead of waiting for Prometheus to pull data, short-lived jobs actively push their metrics to the Pushgateway and then exit. Prometheus then scrapes this “mailbox” on its regular schedule, just like any other target.
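To make the “mailbox” idea concrete, here is a toy in-memory model in Python. It is a simplification written for illustration (the class name is hypothetical and it is not the real server): metrics are stored per grouping key (the labels in the push URL, such as job), a PUT replaces the whole group, and a POST replaces only metrics with the same names, which matches the Pushgateway’s documented push semantics. Note what it never does: expire anything.

```python
from typing import Dict, Tuple

# A grouping key is the set of labels from the push URL path, e.g. {"job": "backup_db"}.
GroupKey = Tuple[Tuple[str, str], ...]


class ToyPushgateway:
    """Hypothetical in-memory model of the Pushgateway "mailbox" (illustration only)."""

    def __init__(self) -> None:
        self._groups: Dict[GroupKey, Dict[str, float]] = {}

    @staticmethod
    def _key(grouping: Dict[str, str]) -> GroupKey:
        # Label order does not matter for a grouping key, so normalize it.
        return tuple(sorted(grouping.items()))

    def put(self, grouping: Dict[str, str], metrics: Dict[str, float]) -> None:
        # PUT semantics: replace every metric in this group.
        self._groups[self._key(grouping)] = dict(metrics)

    def post(self, grouping: Dict[str, str], metrics: Dict[str, float]) -> None:
        # POST semantics: replace only metrics with the same name, keep the rest.
        self._groups.setdefault(self._key(grouping), {}).update(metrics)

    def delete(self, grouping: Dict[str, str]) -> None:
        # Only an explicit delete removes a group.
        self._groups.pop(self._key(grouping), None)

    def scrape(self) -> Dict[GroupKey, Dict[str, float]]:
        # Prometheus reads whatever is stored; nothing expires on its own.
        return {key: dict(metrics) for key, metrics in self._groups.items()}


if __name__ == "__main__":
    gw = ToyPushgateway()
    gw.put({"job": "backup_db"}, {"db_backup_status": 1})
    # The job has exited, yet every later scrape still returns its last value.
    print(gw.scrape())
```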

Three Ways to Monitor Short-lived Jobs: Which Should You Choose?

Before settling on the Pushgateway approach, I tried a few other methods and came up with this practical comparison:

- Forcing the scrape interval down to 1 second: Sounds reasonable, but the server’s CPU will “scream” under the load. Worse, if a job runs for only 0.5 seconds, there is still a 50% chance that no scrape lands while it is alive, and its data is lost entirely.

- Building a Dedicated Exporter: Too heavy for a 10-line Bash script. You’d have to maintain a background web service just to expose metrics.

- Using Pushgateway: The optimal solution. The script only needs a simple curl command, and the data lands safely on the dashboard.

Warning: “Death Traps” After 6 Months in Production

Pushgateway is powerful, but it’s not a magic wand. After half a year of operating a system with over 200 CronJobs, here is my honest assessment of its strengths and its critical weaknesses:

Great Advantages

- Absolute Flexibility: Anything that can send an HTTP request works, from Shell scripts to Java.

- Clean Jobs: They shut down after running, consuming no resources to maintain ports or services on the job server.

Disadvantages and Risks (Pay Close Attention)

- Single point of failure: If the Pushgateway goes down, all batch jobs disappear from the radar. You must monitor it strictly.

- The “Stale metrics” Trap: This is the most frustrating part. Pushgateway never deletes data on its own. If a job reports status=1 (success) today but fails to run tomorrow, Pushgateway keeps serving that “1”, and Prometheus will falsely report that everything is fine.

- Lack of an “up” metric: You can’t use the up metric to check a job’s health the way you can with Node Exporter. For the pushgateway scrape job, up only tells you whether the Pushgateway itself is reachable.
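One partial workaround for the missing up: the Pushgateway attaches a push_time_seconds metric to every group, recording the time of the last successful push, and exposes it on its own /metrics page (for example http://localhost:9091/metrics). A job that stops pushing shows up as a steadily growing age. The Python sketch below parses that text; the function names are hypothetical, and the parser is deliberately simple (it assumes one group per job name and does not unescape label values).

```python
import re
import time
from typing import Dict, List, Optional

# Matches lines such as: push_time_seconds{instance="prod_server",job="backup_db"} 1.7e+09
_PUSH_TIME = re.compile(r'^push_time_seconds\{(?P<labels>[^}]*)\}\s+(?P<value>\S+)')
# Matches one name="value" pair; escaped characters are kept as-is, not unescaped.
_LABEL = re.compile(r'(\w+)="((?:[^"\\]|\\.)*)"')


def push_ages(exposition: str, now: Optional[float] = None) -> Dict[str, float]:
    """Seconds since each group's last successful push, keyed by the job label.

    Assumption: one group per job name; a second group with the same job
    label would overwrite the first in this simplified sketch.
    """
    now = time.time() if now is None else now
    ages: Dict[str, float] = {}
    for line in exposition.splitlines():
        match = _PUSH_TIME.match(line)
        if not match:
            continue  # skip HELP/TYPE comments and every other metric
        labels = dict(_LABEL.findall(match.group("labels")))
        ages[labels.get("job", "")] = now - float(match.group("value"))
    return ages


def silent_jobs(exposition: str, max_age_seconds: float,
                now: Optional[float] = None) -> List[str]:
    """Jobs with no successful push within max_age_seconds: a stand-in for up == 0."""
    ages = push_ages(exposition, now)
    return sorted(job for job, age in ages.items() if age > max_age_seconds)
```

In Prometheus itself, with honor_labels enabled, an expression such as time() - push_time_seconds > 86400 expresses the same check without any extra script.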

Deploying Pushgateway in 5 Minutes

Using Docker is the fastest way to integrate Pushgateway into your system without cluttering the OS.

1. Initialization with Docker Compose

Create a docker-compose.yml file with a minimal configuration:

```yaml
services:
  pushgateway:
    image: prom/pushgateway:v1.9.0
    container_name: pushgateway
    ports:
      - "9091:9091"
    restart: always
```

Run the command docker-compose up -d, then access http://your-ip:9091 to check the management interface.
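Because the Pushgateway is a single point of failure, it is worth checking it from scripts too, not only by eye in the browser. The Pushgateway serves a /-/healthy endpoint; the stdlib-only Python sketch below polls it. The function name and the default base URL http://localhost:9091 are assumptions for illustration.

```python
import http.client
import urllib.request


def pushgateway_healthy(base_url: str = "http://localhost:9091", timeout: float = 3.0) -> bool:
    """Return True if the Pushgateway answers GET /-/healthy with HTTP 200 (sketch)."""
    url = f"{base_url.rstrip('/')}/-/healthy"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except (OSError, http.client.HTTPException):
        # URLError and HTTPError (non-2xx) are OSError subclasses; this also
        # covers refused connections and timeouts.
        return False
```

A deploy script can call this right after docker-compose up -d. For ongoing monitoring, alerting on up{job="pushgateway"} == 0 in Prometheus is the more robust option once the scrape job is configured.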

2. Pushing Data from a Real Script

Suppose you have a 500GB database backup script. We need to monitor its size and status.

Bash Example (Using curl)

```bash
# Push metrics with detailed labels; "--data-binary @-" makes curl read the body from stdin (the heredoc)
cat <<EOF | curl --data-binary @- http://localhost:9091/metrics/job/backup_db/instance/prod_server
# HELP db_backup_size_bytes Backup file size
# TYPE db_backup_size_bytes gauge
db_backup_size_bytes 536870912000
# HELP db_backup_status 1=success, 0=failure
# TYPE db_backup_status gauge
db_backup_status 1
EOF
```

Python Example (Using prometheus_client)

```python
from prometheus_client import CollectorRegistry, Gauge, push_to_gateway

# Use a dedicated registry so only this job's metrics are pushed
registry = CollectorRegistry()
g = Gauge('job_last_success_unixtime', 'Unix timestamp of the last successful job run', registry=registry)
g.set_to_current_time()

# Push metrics to the gateway (PUT: replaces all metrics in the job="daily_report" group)
push_to_gateway('localhost:9091', job='daily_report', registry=registry)
```

3. Configuring Prometheus Scrape

Don’t forget to edit your prometheus.yml file. Here is the standard configuration:

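```yaml
scrape_configs:
  - job_name: 'pushgateway'
    honor_labels: true
    static_configs:
      - targets: ['localhost:9091']
```

Pro tip: You must set honor_labels: true. Without it, Prometheus treats the job and instance labels you pushed as conflicts with the scrape target’s own labels (job="pushgateway", instance="localhost:9091"): it renames yours to exported_job and exported_instance and attaches the target’s values instead.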
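The label-conflict rule behind honor_labels can be modeled in a few lines. The Python sketch below is a simplified model with a hypothetical function name; it ignores edge cases such as empty label values and the repeated exported_ prefixing Prometheus applies when exported_<name> already exists.

```python
from typing import Dict


def resolve_labels(scraped: Dict[str, str], target: Dict[str, str],
                   honor_labels: bool) -> Dict[str, str]:
    """Simplified model of how Prometheus merges scraped labels with target labels.

    honor_labels=True: on conflict, the scraped (pushed) value wins.
    honor_labels=False: the conflicting scraped label is renamed to
    exported_<name> and the target's value is attached instead.
    """
    merged = dict(scraped)
    for name, value in target.items():
        if name not in merged:
            merged[name] = value  # no conflict: the target label is simply added
        elif not honor_labels:
            merged[f"exported_{name}"] = merged[name]
            merged[name] = value
    return merged


if __name__ == "__main__":
    pushed = {"job": "backup_db", "instance": "prod_server"}
    target = {"job": "pushgateway", "instance": "localhost:9091"}
    print(resolve_labels(pushed, target, honor_labels=True))
    print(resolve_labels(pushed, target, honor_labels=False))
```

With honor_labels enabled, the backup series keeps job="backup_db"; without it, every pushed job ends up under job="pushgateway", and dashboards filtering on job silently match nothing.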

Vital Lessons from Operation

To avoid being misled by your dashboard, follow these two golden rules:

Always Use Success Timestamps: Instead of relying only on the 0/1 status, push an additional metric: job_last_success_unixtime. In Grafana, use the expression time() - job_last_success_unixtime. If this value exceeds 24 hours (86400 seconds), you’ll know for sure that last night’s backup job had an issue.
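The Grafana expression is plain arithmetic on Unix timestamps. The Python sketch below mirrors it, assuming the 24-hour threshold from above; the function names are hypothetical. The second function shows why a status of 1 is not enough on its own.

```python
import time
from typing import Optional

DAY_SECONDS = 24 * 60 * 60  # 86400, the 24-hour threshold assumed above


def backup_overdue(last_success_unixtime: float, now: Optional[float] = None,
                   max_age_seconds: float = DAY_SECONDS) -> bool:
    """Mirror of the Grafana check: time() - job_last_success_unixtime > 24h."""
    now = time.time() if now is None else now
    return now - last_success_unixtime > max_age_seconds


def backup_healthy(status: int, last_success_unixtime: float,
                   now: Optional[float] = None) -> bool:
    # A status of 1 only counts if the success is recent; a stale "1" is the trap.
    return status == 1 and not backup_overdue(last_success_unixtime, now)
```

For example, a job that last succeeded 30 hours ago still shows status 1 in the Pushgateway, yet backup_healthy(1, now - 30 * 3600, now) returns False.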

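Regular Cleanup: Every distinct grouping key becomes a separate group that Pushgateway keeps in memory until it is explicitly deleted. For jobs that push with dynamic labels (like random IDs), the number of groups grows very quickly and can consume GBs of RAM. Use a script that calls the DELETE API to clean up expired metrics.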
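Scripting that cleanup could look like the stdlib-only Python sketch below. All function names are hypothetical. It assumes you already have each group’s last push time (for example from the push_time_seconds metric the Pushgateway exposes) and that label values are non-empty and contain no “/”, since those require the Pushgateway’s @base64 path encoding, which this sketch does not implement. For a single known group, the curl one-liner that follows is enough.

```python
import urllib.parse
import urllib.request
from typing import Dict, Iterable, List, Tuple


def group_url(base_url: str, grouping: Dict[str, str]) -> str:
    """Build <base>/metrics/job/<job>{/<label>/<value>} for one grouping key.

    Assumption: label values are non-empty and contain no '/', because those
    would need the Pushgateway's @base64 encoding, which this sketch skips.
    """
    labels = dict(grouping)
    job = labels.pop("job")  # the job label always comes first in the path
    segments: List[str] = []
    for name, value in [("job", job)] + sorted(labels.items()):
        if not value or "/" in value:
            raise ValueError(f"label {name!r} needs @base64 encoding: {value!r}")
        segments += [name, urllib.parse.quote(value, safe="")]
    return base_url.rstrip("/") + "/metrics/" + "/".join(segments)


def expired_groups(push_times: Iterable[Tuple[Dict[str, str], float]], now: float,
                   max_age_seconds: float) -> List[Dict[str, str]]:
    """Grouping keys whose last push is older than max_age_seconds."""
    return [grouping for grouping, pushed_at in push_times if now - pushed_at > max_age_seconds]


def delete_group(base_url: str, grouping: Dict[str, str], timeout: float = 5.0) -> int:
    """Send DELETE for exactly one group and return the HTTP status code."""
    request = urllib.request.Request(group_url(base_url, grouping), method="DELETE")
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

Run from a daily cron, calling delete_group on each result of expired_groups keeps the number of groups bounded. Each call removes exactly one group.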

```bash
# Delete one group; the URL must repeat the full grouping key used at push time
# (job/backup_db alone would not match the job/backup_db/instance/prod_server group)
curl -X DELETE http://localhost:9091/metrics/job/backup_db/instance/prod_server
```

Since implementing this combination, I no longer have to stay up late checking logs or SSHing into every server. In the morning, I can just enjoy a cup of coffee and glance at the dashboard to see the health of the entire system. If you’re managing dozens of critical CronJobs, deploy it now!