Getting Started: 5 Minutes to Dive In

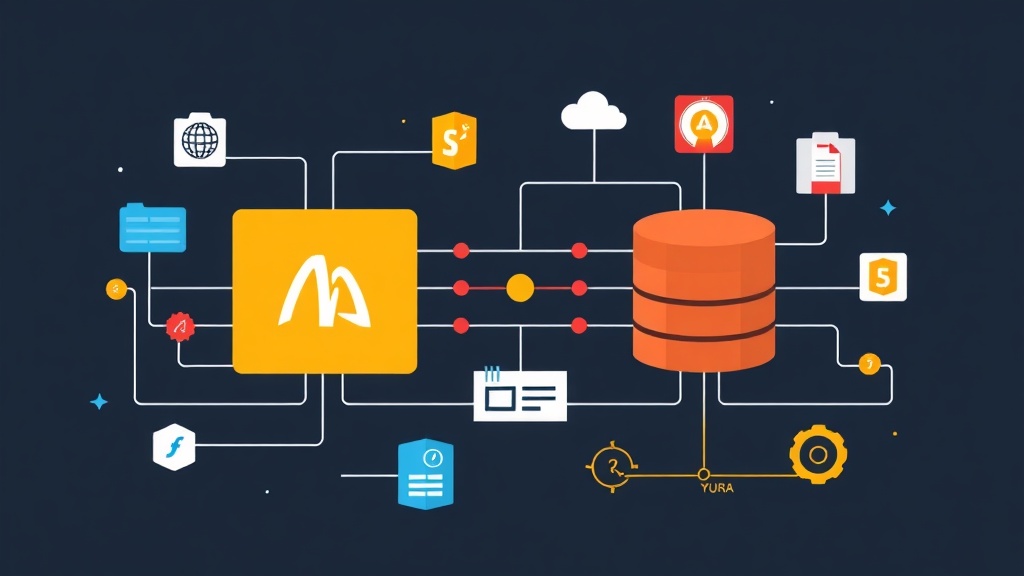

Suppose you are managing two Masters (Source A and Source B) and need to pipe all data to a single Slave (Replica) for reporting. Instead of wrestling with code, leverage the FOR CHANNEL keyword. This is the “key” for MySQL to distinguish between different data streams.

Once you have created the replication users on the Masters, simply load the configuration on the Replica with these high-value commands:

-- Configure channel from Source A (Example: DB Orders)

CHANGE REPLICATION SOURCE TO

SOURCE_HOST='192.168.1.10',

SOURCE_USER='repl_user',

SOURCE_PASSWORD='super_secure_password',

SOURCE_LOG_FILE='mysql-bin.000001',

SOURCE_LOG_POS=156

FOR CHANNEL 'source_A';

-- Configure channel from Source B (Example: DB Users)

CHANGE REPLICATION SOURCE TO

SOURCE_HOST='192.168.1.20',

SOURCE_USER='repl_user',

SOURCE_PASSWORD='super_secure_password',

SOURCE_LOG_FILE='mysql-bin.000005',

SOURCE_LOG_POS=450

FOR CHANNEL 'source_B';

-- Activate both streams in parallel

START REPLICA FOR CHANNEL 'source_A';

START REPLICA FOR CHANNEL 'source_B';To check if everything is running smoothly, run SHOW REPLICA STATUS\G. If both channels show Running: Yes, congratulations—the foundation is set!

A Real-World Story: When 5 Microservices Need to ‘Meet’

Back when I worked at an e-commerce startup, the system had 5 microservices running on 5 separate databases. One fine day, the boss asked for a real-time revenue dashboard by 8 AM the next morning. At that moment, the thought of writing code to join 5 remote databases made me break a cold sweat due to the latency and complexity.

The lifesaver then was MySQL Multi-Source Replication. Instead of using bulky ETL tools, I synchronized all 5 data sources (totaling about 200GB) directly to a central Replica with 16GB of RAM. The results were surprising:

- High-speed reporting: Query freely on the Replica without affecting the order speed on the Master.

- Effortless backups: Instead of running 5 backup jobs, I just consolidated everything and backed it up once.

- Multi-region analysis: Aggregating data from Singapore and US servers back to Vietnam with only 100-200ms latency.

Detailed Configuration: Precision Matters

To keep the system stable in production, I highly recommend: Use GTID (Global Transaction Identifiers). Avoid traditional binlog_file methods unless you enjoy staying up all night fixing errors whenever a Master fails.

1. Optimizing my.cnf

Open your configuration file and ensure the following parameters are present:

[mysqld]

server-id = 101 # Unique ID for each server

gtid_mode = ON

enforce_gtid_consistency = ON

log_bin = /var/log/mysql/mysql-bin.log

log_slave_updates = ON # Necessary if you want this Replica to serve as a source for other nodes2. Securing the Replication User

Never use the root user. Create a dedicated user with minimal required privileges to reduce security risks:

CREATE USER 'repl_user'@'%' IDENTIFIED WITH mysql_native_password BY 'super_secure_password';

GRANT REPLICATION SLAVE ON *.* TO 'repl_user'@'%';

FLUSH PRIVILEGES;3. Tips for Using Filters

Typically, you only need a few important databases rather than the entire server. For example: Source A pulls db_orders, while Source B pulls db_users. Configure filters directly to avoid data overlap:

CHANGE REPLICATION FILTER REPLICATE_DO_DB = (db_orders) FOR CHANNEL 'source_A';

CHANGE REPLICATION FILTER REPLICATE_DO_DB = (db_users) FOR CHANNEL 'source_B';Pitfalls I’ve Experienced

The configuration might look smooth, but when real-world data starts flooding in (around 10 million records per day), you’ll encounter some painful issues.

Primary Key Conflict

This is the classic error. If both Source A and Source B create a user with ID 1, when they reach the Replica, the system will throw a Duplicate entry error and stop immediately.

How I handled it:

- Switch all Primary Keys to UUIDs instead of Auto Increment.

- If you are already using Auto Increment, configure different

auto_increment_offsetvalues (e.g., odd numbers for Source A, even numbers for Source B). - Or the simplest way: Map each Source to a separate Schema (Database) on the Replica.

Handling Slave Lag

When handling data from multiple sources, the Replica can easily get overwhelmed, causing the dashboard data to become stale. I optimized this by enabling Multi-Threaded Slave (MTS) in MySQL 8.0. For a 16-core server, setting 8 workers is the sweet spot:

set global slave_parallel_type = 'LOGICAL_CLOCK';

set global slave_parallel_workers = 8; This configuration reduced latency from 30 minutes to under 1 second. Watching the dashboard numbers update in real-time is incredibly satisfying!

Final Thoughts from the Server Room

After years of operation, I’ve learned some hard lessons: always monitor relay logs to avoid filling up the disk, and use Percona XtraBackup instead of standard mysqldump. If you’re planning to build a centralized data warehouse, MySQL Multi-Source is definitely the first tool you should consider. If you have any configuration issues, feel free to drop me a message in the comments!