Building AI Without Observability Is a Mistake

If you’re building applications with LangChain, you’ve likely encountered a bot suddenly giving “clueless” answers. Even worse, some chains might take 20 seconds to run without you knowing where the bottleneck is. From many real-world projects, I’ve realized one thing: Observability is a survival skill. If you can’t see what’s happening inside those abstract layers of code, debugging is like looking for a needle in a haystack.

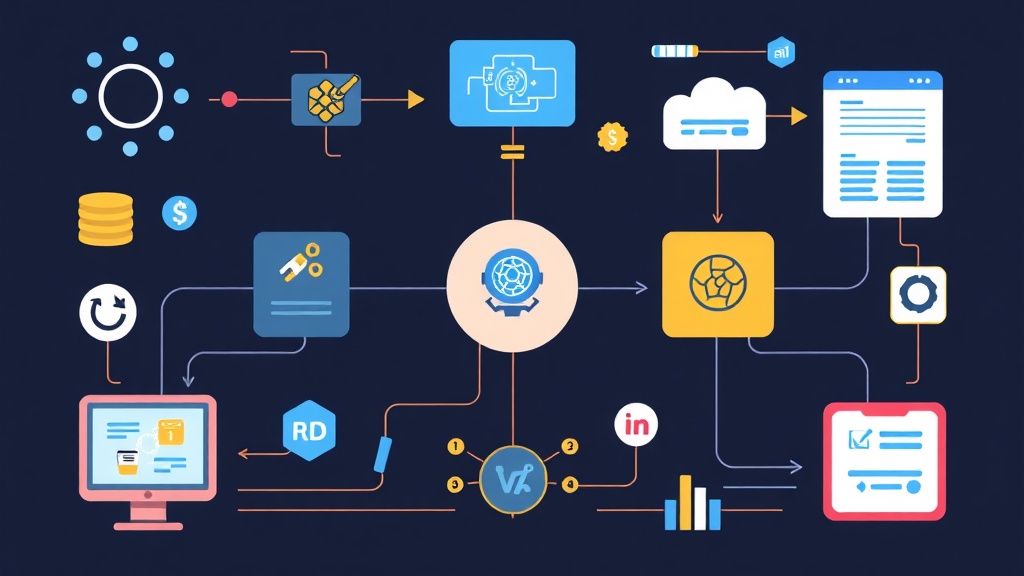

LangSmith was created to eliminate that ambiguity. It’s not just a simple logging tool but an entire ecosystem for tracing, evaluating, and managing prompts. In this article, I’ll show you how to integrate LangSmith into your project in a heartbeat, along with practical experience for taking AI to production.

Quick Start: See Results in 5 Minutes

The good news is that you don’t need a complex server setup. LangSmith provides a very smooth cloud version ready for immediate use.

Step 1: Get an API Key

Visit smith.langchain.com to sign up for an account. Go to Settings, create a new API Key, and make sure to copy it immediately as it won’t be shown again.

Step 2: Configure Environment Variables

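LangChain reads its tracing configuration from environment variables, so you don’t need to change any code to enable it. Add these lines to your .env file or enter them directly into your terminal (newer LangSmith documentation uses LANGSMITH_-prefixed names such as LANGSMITH_TRACING and LANGSMITH_API_KEY for the same settings):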

export LANGCHAIN_TRACING_V2=true

export LANGCHAIN_ENDPOINT="https://api.smith.langchain.com"

export LANGCHAIN_API_KEY="lsv2_pt_..."

export LANGCHAIN_PROJECT="itfromzero-demo" # Project name for easy management

Step 3: Run the Code

The biggest plus is that you don’t have to modify a single line of logic. LangChain will automatically “send” data to the dashboard. Try this simple code snippet:

import os

from langchain_openai import ChatOpenAI

from langchain_core.prompts import ChatPromptTemplate

model = ChatOpenAI(model="gpt-4o-mini")

prompt = ChatPromptTemplate.from_template("Explain {topic} for beginners.")

chain = prompt | model

response = chain.invoke({"topic": "LangSmith Tracing"})

print(response.content)

Now, open the LangSmith dashboard. A new “Run” has appeared with full details, from input and output to response times.

Understanding Traces: Don’t Let Data Mislead You

The LangSmith interface uses a tree view where each node is a run (a span, in general tracing terms). For complex RAG (Retrieval-Augmented Generation) applications, this view is invaluable:

- Actual Input/Output: You can see exactly what the final prompt sent to OpenAI looks like. Sometimes errors occur just because of a space or a variable injected in the wrong format.

- Measuring Latency: How many milliseconds did each step take? Example: Retrieval might take only 200ms, but the LLM “hangs” for 10s. You’ll know exactly where to optimize.

- Token Control: Monitor costs in real time. I once discovered an infinite-loop bug that drained $50 in credits overnight by noticing an abnormal spike in the token graph.
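The tree is not limited to LangChain components. As a minimal sketch, assuming the `langsmith` package is installed and tracing is enabled through the environment variables from the Quick Start, the SDK's `traceable` decorator records each decorated function call as its own node, so nested calls appear as nested steps with their own latency. The function names and the sleep-based stand-ins for retrieval and the LLM call are hypothetical.

```python
import time

try:
    from langsmith import traceable  # decorator from the LangSmith SDK
except ImportError:
    # Fallback so the sketch still runs without the SDK (nothing is traced).
    def traceable(*args, **kwargs):
        if len(args) == 1 and callable(args[0]) and not kwargs:
            return args[0]
        return lambda fn: fn


@traceable(name="retrieve_docs")
def retrieve_docs(question: str) -> list[str]:
    """Hypothetical stand-in for a vector store lookup."""
    time.sleep(0.01)
    return [f"doc about {question}"]


@traceable(name="generate_answer")
def generate_answer(question: str, docs: list[str]) -> str:
    """Hypothetical stand-in for the LLM call."""
    time.sleep(0.02)
    return f"Answer to '{question}' using {len(docs)} document(s)."


@traceable(name="rag_pipeline")
def rag_pipeline(question: str) -> str:
    # The two calls below should show up as children of "rag_pipeline".
    docs = retrieve_docs(question)
    return generate_answer(question, docs)
```

Calling `rag_pipeline("LangSmith Tracing")` with tracing enabled should produce one parent node with two children, which is where a slow retrieval step and a slow generation step become easy to tell apart.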

Advanced: Automated Testing Instead of Manual Input

Writing the code is just the first step. How do you know if a new prompt is actually better than the old one? Instead of manually typing every query, I often use the Datasets & Testing feature.

You can group sample questions into a Dataset and then run batch tests. LangSmith allows you to use AI itself (e.g., GPT-4) to grade the smaller model’s answers based on criteria like accuracy, helpfulness, or JSON format. This approach gives our team the confidence to merge code without worrying about degrading the bot’s response quality.
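The batch-testing idea can be illustrated without the LangSmith API at all. The sketch below is plain Python, not the Datasets & Testing feature itself: it swaps the AI grader described above for two deterministic checks (valid JSON, expected answer present) and uses stub functions in place of real prompts. Every name in it is hypothetical.

```python
import json
from statistics import mean
from typing import Callable

# Hypothetical dataset: sample questions with reference answers.
DATASET = [
    {"question": "capital of France", "expected": "Paris"},
    {"question": "capital of Japan", "expected": "Tokyo"},
]


def is_valid_json(text: str) -> float:
    """Score 1.0 if the output parses as JSON, else 0.0."""
    try:
        json.loads(text)
        return 1.0
    except json.JSONDecodeError:
        return 0.0


def contains_expected(text: str, expected: str) -> float:
    """Score 1.0 if the reference answer appears in the output."""
    return 1.0 if expected.lower() in text.lower() else 0.0


def run_batch(candidate: Callable[[str], str]) -> dict[str, float]:
    """Run one candidate over the whole dataset and average each score."""
    rows = []
    for example in DATASET:
        output = candidate(example["question"])
        rows.append({
            "valid_json": is_valid_json(output),
            "accuracy": contains_expected(output, example["expected"]),
        })
    return {key: mean(row[key] for row in rows) for key in ("valid_json", "accuracy")}


# Stubs standing in for "old prompt" and "new prompt" versions of a bot.
ANSWERS = {"capital of France": "Paris", "capital of Japan": "Tokyo"}


def old_prompt_bot(question: str) -> str:
    return f"The answer is {ANSWERS[question]}"


def new_prompt_bot(question: str) -> str:
    return json.dumps({"answer": ANSWERS[question]})
```

Comparing `run_batch(old_prompt_bot)` with `run_batch(new_prompt_bot)` gives one number per criterion for each version, which is the kind of side-by-side comparison that makes a merge decision less of a guess.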

Practical Experience from a DevOps Perspective

When deploying to a system with real users, keep these 4 points in mind:

1. Separate Environments Clearly

Don’t dump all data into a default project. Use LANGCHAIN_PROJECT to separate dev, staging, and production. This helps you avoid noise when filtering for error data.
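One way to make the separation hard to forget is to derive the project name from the deployment environment at startup. This is a minimal sketch: the helper name, the allowed environment list, and the `app-env` naming scheme are my own assumptions, and the variable should be set before any chain runs, since the SDK may cache it after first use.

```python
import os

# Hypothetical set of deployment environments.
ALLOWED_ENVS = ("dev", "staging", "production")


def configure_project(app_name: str, env: str) -> str:
    """Set LANGCHAIN_PROJECT to '<app_name>-<env>' and return the name."""
    if env not in ALLOWED_ENVS:
        raise ValueError(f"Unknown environment: {env!r}")
    project = f"{app_name}-{env}"
    os.environ["LANGCHAIN_PROJECT"] = project
    return project
```

For example, `configure_project("itfromzero-demo", "staging")` routes traces to a project named `itfromzero-demo-staging`, and a typo in the environment name fails loudly instead of silently creating a new project.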

2. Careful with Sensitive Data (PII)

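LangSmith stores the inputs and outputs of every traced run, which includes the chat history. If users enter emails or credit card numbers, they’ll be visible on the dashboard. Configure the hide_inputs option (and its counterpart for outputs) in your code to keep customer data out of your traces.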
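If hiding inputs entirely loses too much debugging value, redacting them is a middle ground. The sketch below is a hypothetical redaction helper using two simple regular expressions; real PII detection needs far more than this. As far as I know, the `langsmith` `Client` accepts `hide_inputs` and `hide_outputs` either as booleans or as a function from dict to dict, but treat that wiring as an assumption to verify against your SDK version.

```python
import re
from typing import Any

# Deliberately simple patterns; they will miss cases and may over-match.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")  # 13 to 16 digits


def redact_text(text: str) -> str:
    """Replace emails and card-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return CARD_RE.sub("[CARD]", text)


def redact_pii(payload: Any) -> Any:
    """Recursively redact strings inside dicts and lists."""
    if isinstance(payload, str):
        return redact_text(payload)
    if isinstance(payload, dict):
        return {key: redact_pii(value) for key, value in payload.items()}
    if isinstance(payload, list):
        return [redact_pii(item) for item in payload]
    return payload


# Assumed wiring (not executed here; check your SDK version):
# from langsmith import Client
# client = Client(hide_inputs=redact_pii, hide_outputs=redact_pii)
```

Passing `True` instead of a function would hide the payload completely, which is the safer default when you are unsure what users might type.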

3. Sampling Techniques for Production

Tracing everything in a production environment with 10,000 daily users can be expensive and add network overhead. Instead, use sampling: capturing a random 5-10% of traces is usually enough to monitor system health.
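To make the idea concrete, here is a minimal sketch of what random sampling does, plus the setting I believe controls it. The environment variable name follows the LangSmith documentation as I understand it (newer versions use a LANGSMITH_ prefix), so treat it as an assumption; the two helper functions are hypothetical and only illustrate the math.

```python
import os
import random

# Assumption: the SDK reads a 0.0-1.0 sampling rate from this variable.
# Set it before the application starts tracing.
os.environ.setdefault("LANGCHAIN_TRACING_SAMPLING_RATE", "0.1")


def should_trace(sample_rate: float, rng: random.Random) -> bool:
    """Keep a trace with probability sample_rate (0.0 = none, 1.0 = all)."""
    return rng.random() < sample_rate


def estimate_kept(total_requests: int, sample_rate: float, seed: int = 0) -> int:
    """Simulate how many of total_requests would be traced."""
    rng = random.Random(seed)
    return sum(should_trace(sample_rate, rng) for _ in range(total_requests))
```

At a rate of 0.1, roughly one request in ten is kept, so a rare failure that affects 1% of traffic still shows up several times a day at 10,000 requests.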

4. Leverage the Feedback Loop

If a user clicks “Dislike,” use the LangSmith SDK to attach feedback with score=0 to that specific run_id. At the end of the week, just filter for low-scored runs and analyze them. This is the fastest way to improve prompts based on real feedback.
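A minimal sketch of that hook, assuming the `langsmith` `Client` and its `create_feedback` method. The helper name and the `user_rating` feedback key are hypothetical, and the client is passed in as a parameter so the function can be exercised with a stub.

```python
from typing import Any


def record_user_rating(client: Any, run_id: str, liked: bool, comment: str = "") -> None:
    """Attach a 1/0 score to a run based on the user's Like/Dislike click."""
    client.create_feedback(
        run_id,
        key="user_rating",  # hypothetical feedback key; pick your own
        score=1 if liked else 0,
        comment=comment or None,
    )


# Assumed usage with the real SDK (needs an API key and a real run_id):
# from langsmith import Client
# record_user_rating(Client(), run_id, liked=False)
```

Keeping the feedback key consistent across the application matters more than its exact name, since it is what you filter on later.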

Conclusion

Building AI without monitoring tools is like driving through thick fog. LangSmith might feel overwhelming due to its many features, but simply turning on Tracing puts you far ahead of many peers.

Don’t hesitate to try it in your current project. Trust me, you’ll find “clueless” bugs that a standard print() statement would never catch. If you face any setup issues, feel free to leave a comment, and I’ll support you right away.