The Nightmare of a ‘3 AM Database Crash’

Imagine this: It’s 3 AM, and your phone is vibrating uncontrollably. The Primary database is down, your boss is calling incessantly, and customers are complaining on every front. Early in my career, I once spent an entire hour SSH-ing into servers just to check which nodes were still alive. Then came a series of risky manual steps: typing SELECT pg_promote(); and rushing to update the application configuration to point to a new IP. A single slip-up was enough to make data vanish forever.

If you have mastered PostgreSQL Streaming Replication, you already have a data backup. But replication alone isn’t enough. It cannot automatically detect failures or perform a failover when an incident occurs. That is why Patroni was created—to shoulder all of that heavy and stressful work.

Three Common Approaches to High Availability (HA)

Before choosing Patroni, let’s look back at the methods tech professionals often use when a Primary node ‘dies’:

1. Manual Failover

- How it works: An admin directly intervenes to upgrade a secondary node.

- Pros: Absolute control, avoids accidental node switching.

- Cons: Far too slow. Downtime lasts as long as it takes for you to wake up and log in.

2. Custom Scripts

- How it works: Using Bash or Python to constantly ping the Primary and automatically promote a Standby.

- Pros: Faster response than a human.

- Cons: Extremely dangerous due to Split-brain scenarios. When two nodes both think they are the Primary and write data simultaneously, your database will be corrupted.

3. Patroni – The Current Gold Standard

- How it works: Centralized management through a ‘shared ledger’ (a DCS like ETCD or Consul).

- Pros: Fully automated, prevents Split-brain using consensus algorithms, and supports a great REST API.

- Cons: Requires extra time for initial configuration research.

Why Patroni is the Top Choice for Large-Scale Projects

I once deployed a system for an e-commerce platform with over 15,000 concurrent users. The requirement was strict: downtime could not exceed 30 seconds. After testing Repmgr and Patroni, I chose Patroni thanks to its Distributed Configuration Store (DCS) mechanism.

Think of ETCD as a powerful referee. Patroni on each node constantly sends a ‘heartbeat’ signal to the referee. If the Primary remains silent for more than 10 seconds, the referee immediately orders the remaining nodes to elect a new Leader. This process happens transparently, accurately, and without human intervention.

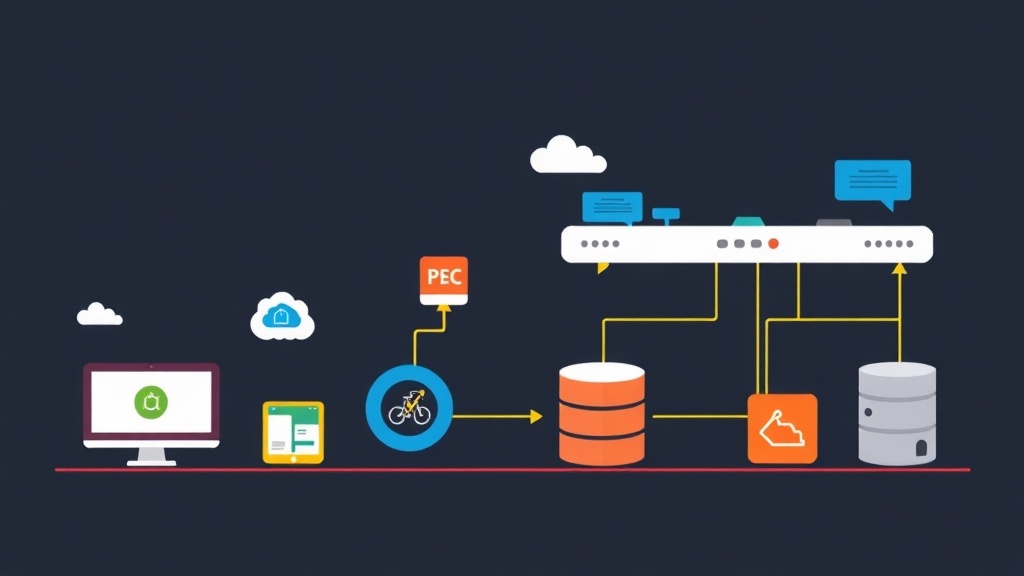

Standard Architecture of a Patroni Cluster

A real-world model I often set up includes:

- PostgreSQL: The main character storing the data.

- Patroni: The ‘bodyguard’ running alongside each PostgreSQL instance.

- ETCD: The place that stores the vital status of the entire cluster (Cluster state).

- HAProxy & Keepalived: Provides a single Virtual IP. The application only needs to connect to this IP; HAProxy will automatically know which node is currently the Primary to route the traffic.

Detailed Deployment Guide

Suppose you have 3 Ubuntu servers. We will focus on installing Patroni and ETCD to create the backbone of the system.

Step 1: Setting up ETCD (The State Store)

Install etcd on all 3 nodes:

sudo apt-get update

sudo apt-get install etcd -yEdit /etc/default/etcd so the nodes can see each other. This is a vital step to create consensus within the cluster.

Step 2: Installing PostgreSQL and Patroni

Important Note: Do not initialize the database using initdb. Let Patroni handle that to ensure consistency.

sudo apt-get install postgresql-15 python3-pip -y

sudo pip3 install patroni[etcd]Step 3: Configuring the ‘Heart’ – patroni.yml

Here are the key parameters you need to note:

scope: postgres-cluster

name: pg-node-1 # Set a unique name for each node

dcs:

ttl: 30 # Leader key retention time

loop_wait: 10

retry_timeout: 10

etcd:

hosts: 10.0.0.1:2379,10.0.0.2:2379,10.0.0.3:2379

postgresql:

listen: 0.0.0.0:5432

data_dir: /var/lib/postgresql/15/main

bin_dir: /usr/lib/postgresql/15/bin

authentication:

replication:

username: replicator

password: your_passwordStep 4: Activating the System

Launch Patroni with the following command:

patroni patroni.ymlThe first node will automatically initialize the database and become the Leader. Subsequent nodes, once turned on, will automatically run pg_basebackup from the Leader. You don’t need to type any manual backup or restore commands. Extremely convenient!

Testing Failover: The Moment of Truth

Now is the time to check the results. Use the patronictl command to view the status:

patronictl -c patroni.yml listYou will see a table appear with one Leader node and several Replica nodes. Now, try ‘pulling the plug’ by stopping the service on the Leader node:

sudo systemctl stop patroniAfter about 5-10 seconds, look at the logs of the remaining nodes. A Replica will automatically be promoted to Leader. When you turn the old node back on, it will realize it has been ‘deposed’ and quietly return as a Standby.

Real-World Lessons from the Trenches

Operating Patroni in practice has a few ‘thorny’ points you should remember:

- HAProxy is Mandatory: Patroni does not automatically change the IP for the application. You need HAProxy to call Patroni’s REST API (port 8008) to check which node is the actual master.

- Watchdog Configuration: Use Linux Watchdog to automatically reboot the server if Patroni hangs. It helps completely eliminate ‘zombie’ nodes that cause interference in the cluster.

- Sync Bandwidth: If your DB is larger than 500GB, use a 10Gbps network. When a new node joins the cluster, it will pull a massive amount of data, which can easily congest the current Leader’s network.

Mastering Patroni not only levels up your system but also helps you get a better night’s sleep. Instead of being on call 24/7, let automation handle the burden for you.