Why You Shouldn’t Stick with Local Storage Forever

Installing Proxmox on a single server and storing VMs on local drives is the fastest way to get started. The risk shows up the moment hardware fails: if the server dies, all your virtual machine data is trapped inside it. Expanding capacity is also a headache, since a single node has only so many drive slots.

In my home lab with 12 VMs, relying on local SSDs once cost me nearly 5 hours of restore time after a controller failure. Features like Live Migration (moving VMs without downtime) and High Availability (automatic recovery when a host fails) work best when every node can reach the same disks, so centralized, shared storage is effectively a prerequisite.

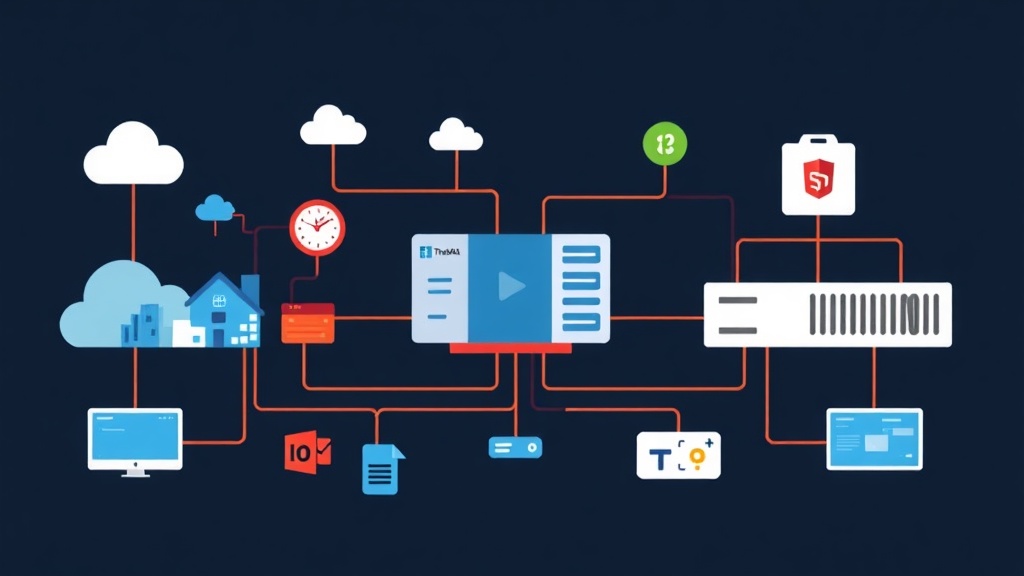

TrueNAS is a good fit for this job. We will configure the two most popular protocols for connecting Proxmox to TrueNAS: NFS and iSCSI.

NFS and iSCSI: Which Is the Right Choice?

Each protocol has its own strengths. Understanding how each one works will help you pick the right one for each workload.

1. NFS (Network File System) – Simple and Flexible

NFS operates at the file level. It behaves like a shared folder on the network, where Proxmox stores VM disks as image files (for example, .qcow2).

- Pros: Extremely fast configuration, allows simultaneous access from multiple hosts, and easy direct file management.

- Cons: Performance is typically lower than iSCSI (on the order of 10-15%, though the gap varies with workload and tuning) because of the extra filesystem layer.

2. iSCSI (Internet Small Computer System Interface) – Speed and Power

iSCSI operates at the block level. It presents remote storage to Proxmox as if it were a locally attached disk, with the SCSI commands carried over the network.

- Pros: Higher throughput and lower latency than NFS in most setups, which suits databases and other I/O-heavy applications.

- Cons: The setup process involves multiple steps (Target, Portal, LUN) and makes it difficult to manage individual files from the storage side.

Practical Advice: Use NFS for ISO files and backups. For VM operating system disks that need high speed, prefer iSCSI combined with LVM.

Step 1: Configuration on the TrueNAS Side

Before starting, ensure you have already created a Storage Pool on TrueNAS (Core or SCALE).

Configuring NFS

First, create a new Dataset named proxmox-nfs. Then, navigate to Shares -> Unix Shares (NFS) and click Add. Select the path to the Dataset you just created.

Important Note: Under Advanced Options, set Maproot User to root and Maproot Group to wheel (if your TrueNAS version does not offer wheel, pick the root group instead). Without this step, Proxmox is likely to hit “Permission Denied” errors when it tries to write files.

Configuring iSCSI

The iSCSI process is slightly more complex; you must follow the sequence exactly:

- Create Zvol: Under Datasets, select Add Zvol (e.g., 500 GB). This is the raw block device that TrueNAS will present to Proxmox.

- Set up Portal: In Shares -> Block (iSCSI), add a Portal with the TrueNAS IP.

- Configure Target: Create a new Target to identify the storage system.

- Create Extent: Point the Extent to the Zvol created in the first step.

- Link: In the Associated Targets section, connect the Target and Extent together.
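One way to see why the order matters is to model the pieces as plain data. The sketch below is only a conceptual picture, not the TrueNAS API: the class names, the pool path tank/proxmox-iscsi, the IP address, and the object names are all made up for illustration. Each object can only be built once the thing it depends on exists.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zvol:
    name: str         # hypothetical pool/zvol path
    size_gb: int


@dataclass(frozen=True)
class Portal:
    ip: str
    port: int = 3260  # standard iSCSI port


@dataclass(frozen=True)
class Target:
    name: str
    portal: Portal    # a Target is reached through a Portal


@dataclass(frozen=True)
class Extent:
    name: str
    zvol: Zvol        # an Extent is backed by a Zvol


@dataclass(frozen=True)
class AssociatedTarget:
    target: Target    # the final link joins one Target...
    extent: Extent    # ...to one Extent
    lun_id: int = 0


def build_example() -> AssociatedTarget:
    """Create the objects in the same order as the list above."""
    zvol = Zvol(name="tank/proxmox-iscsi", size_gb=500)
    portal = Portal(ip="192.168.1.50")
    target = Target(name="proxmox", portal=portal)
    extent = Extent(name="proxmox-extent", zvol=zvol)
    return AssociatedTarget(target=target, extent=extent)


link = build_example()
print(link.extent.zvol.name, "is exposed via", link.target.portal.ip)
```

If the Extent or Target is missing, there is nothing for the final link to connect, which is why an incomplete sequence leaves Proxmox unable to see any LUN.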

Step 2: Connecting from the Proxmox VE Interface

Open your browser and log in to your Proxmox node (usually on port 8006).

Adding NFS Storage

Navigate to Datacenter -> Storage -> Add -> NFS. Enter a descriptive name in the ID field and the TrueNAS IP in the Server field. When you click on the Export field, Proxmox will automatically list the available paths. Don’t forget to select ISO Image and VZDump in the Content section.
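The same storage can also be added from a shell on the node with pvesm, the Proxmox storage manager. The sketch below only builds and prints the command rather than running it. The storage ID truenas-nfs, the IP address, and the export path /mnt/tank/proxmox-nfs are placeholders, so substitute your own values. The content types iso and backup correspond to ISO Image and VZDump in the dialog.

```python
import shlex


def pvesm_add_nfs(storage_id, server, export, content=("iso", "backup")):
    """Return the argument list for adding an NFS storage with pvesm."""
    return [
        "pvesm", "add", "nfs", storage_id,
        "--server", server,
        "--export", export,
        "--content", ",".join(content),
    ]


# Placeholder values; replace with your own ID, IP, and dataset path.
cmd = pvesm_add_nfs("truenas-nfs", "192.168.1.50", "/mnt/tank/proxmox-nfs")
print(shlex.join(cmd))
```

Printing the command first makes it easy to review before pasting it into an SSH session on the Proxmox node.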

Adding iSCSI and LVM

For iSCSI, we need to perform two stages so that virtual machines can use it.

Stage 1: Connecting the Target

Go to Add -> iSCSI and enter the TrueNAS IP in the Portal field. In the Target field, open the dropdown and select the IQN you configured. Uncheck “Use LUNs directly” so the LUN can be managed via LVM in the next stage.

Stage 2: Creating the LVM Layer

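Now, go to Add -> LVM. For Base storage, select the iSCSI connection you just created, then pick the LUN under Base volume. Name the Volume Group and select Disk Image for Content. If the storage will be used by several nodes in a cluster, also tick Shared. The iSCSI-backed storage is now ready for new virtual machines.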
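For reference, here is a rough command-line counterpart to the two stages, again only printed rather than executed. It is a sketch under some assumptions: the IQN, the IP address, the storage IDs, the volume group name, and the device path /dev/sdX are placeholders. Unlike the GUI, which creates the volume group for you from the Base volume, this variant creates it by hand with pvcreate and vgcreate. Setting content to none on the iSCSI storage is the CLI counterpart of unchecking “Use LUNs directly”.

```python
import shlex


def iscsi_lvm_commands(portal, target_iqn, device, vg_name,
                       iscsi_id="truenas-iscsi", lvm_id="truenas-lvm"):
    """Return the commands for the two stages, in order, as argument lists."""
    return [
        # Stage 1: register the iSCSI target without using its LUNs directly.
        ["pvesm", "add", "iscsi", iscsi_id,
         "--portal", portal, "--target", target_iqn, "--content", "none"],
        # Stage 2: put LVM on the LUN, then register the volume group.
        ["pvcreate", device],
        ["vgcreate", vg_name, device],
        ["pvesm", "add", "lvm", lvm_id,
         "--vgname", vg_name, "--content", "images", "--shared", "1"],
    ]


# Placeholder values; check lsblk for the real device before running anything.
commands = iscsi_lvm_commands(
    portal="192.168.1.50",
    target_iqn="iqn.2005-10.org.freenas.ctl:proxmox",
    device="/dev/sdX",
    vg_name="vg_truenas",
)
for args in commands:
    print(shlex.join(args))
```

Be careful with the device path: pvcreate writes LVM metadata to whatever disk it is given, so confirm that it really is the iSCSI LUN first.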

Checking Connection Status

If the storage shows a red X, SSH into the Proxmox node and check from the command line. The command iscsiadm -m discovery -t sendtargets -p [TRUENAS_IP] confirms whether the node can see the Target. Then use lsblk to check whether the new disk appears in the device list.
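Discovery output normally has one line per target, in the form address:port,group followed by the IQN. If you want to check it from a script, a small parser along these lines could work. The sample line is illustrative, not captured from a real system, and the function name is my own.

```python
def parse_discovery(output: str) -> list:
    """Parse iscsiadm sendtargets output into a list of dicts."""
    targets = []
    for line in output.splitlines():
        line = line.strip()
        if not line:
            continue
        # Each line looks like: "<ip>:<port>,<tpgt> <iqn>"
        address, _, iqn = line.partition(" ")
        portal, _, tpgt = address.partition(",")
        targets.append({"portal": portal, "tpgt": tpgt, "iqn": iqn.strip()})
    return targets


# Illustrative sample; paste your real iscsiadm output here instead.
sample = "192.168.1.50:3260,1 iqn.2005-10.org.freenas.ctl:proxmox\n"
for target in parse_discovery(sample):
    print(target["portal"], target["iqn"])
```

An empty result means the node reached no targets at that address, which usually points to the Portal, the network path, or a firewall rather than to the LVM layer.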

A Warning About Bottlenecks

Network storage is only as fast as the link it runs over. A 1Gbps port tops out at about 125MB/s in theory and roughly 110MB/s in practice. With 5-7 VMs sharing that link, each gets only a fraction of it under load, and the system can feel very laggy.
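The arithmetic behind those numbers is simple enough to sketch. This assumes the ideal case where the link is split evenly between VMs that are all busy at once, and it ignores protocol overhead, so real figures will be somewhat lower.

```python
def link_throughput_mb_s(gbps: float) -> float:
    """Theoretical throughput in MB/s: 1 Gbps = 1000 Mbit/s, 8 bits per byte."""
    return gbps * 1000 / 8


def per_vm_share_mb_s(gbps: float, vm_count: int) -> float:
    """Even split of the link between VMs that are all active at once."""
    return link_throughput_mb_s(gbps) / vm_count


print(link_throughput_mb_s(1))            # 125.0
print(per_vm_share_mb_s(1, 5))            # 25.0
print(round(per_vm_share_mb_s(1, 7), 1))  # 17.9
print(link_throughput_mb_s(10))           # 1250.0
```

At 18-25MB/s per VM, each virtual disk is slower than a single old spinning hard drive, which is why the lag becomes noticeable.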

If possible, pick up a used 10Gbps card (such as the Mellanox ConnectX-3), which can be found cheaply. Putting storage traffic on its own network, separate from VM traffic, also keeps the two from competing for bandwidth.

Conclusion

Decoupling Storage and Compute is a turning point in making your system more professional. NFS provides convenience for file storage, while iSCSI ensures performance for critical applications. Start with NFS to get familiar, then upgrade to iSCSI as your processing needs grow. Good luck with your setup!